Hey readers! 👋 This week's AI coding news has a clear theme: security is eating the development workflow. Anthropic dropped Claude Code Security and rattled cybersecurity stocks, OpenAI built a million-line codebase without writing a single line by hand, and the code review tooling space is getting crowded fast. Meanwhile, a Reddit thread reminded us that AI-generated PRs still need humans who actually read them. Let's dig in.

🔒 Security Takes Center Stage

The biggest story this week is Anthropic's launch of Claude Code Security, and it moved markets. The tool, powered by Claude Opus 4.6, scans codebases using contextual reasoning rather than pattern matching. In its research preview, it uncovered over 500 vulnerabilities in production open-source projects, bugs that had survived decades of expert review.

Making frontier cybersecurity capabilities available to defenders - Anthropic's official announcement details how the tool reads code like a human security researcher, tracing data flow and component interactions. Every finding goes through multi-stage verification, receives severity ratings, and requires human approval before any patch is applied. – Anthropic

"Claude Code Security reads and reasons about your code the way a human security researcher would: understanding how components interact, tracing how data moves through your application, and catching complex vulnerabilities that rule-based tools miss."

Anthropic launches Claude Code Security, cybersecurity stocks lose value - The market reaction was swift: CrowdStrike, Cloudflare, and Okta dropped 5-9%, and the Global X Cybersecurity ETF fell nearly 5%. Investors are clearly weighing whether AI-native security tools could erode demand for conventional solutions. – heise.de

AI agents are accelerating vulnerability discovery - This piece from The New Stack provides practical context. XBOW, for example, submitted over 1,060 vulnerability reports in just 90 days, outpacing thousands of human researchers. The takeaway for AppSec teams: embed AI into IDEs and CI/CD pipelines now, or fall behind. – The New Stack

Checkmarx extends vulnerability detection to AWS Kiro - On the tooling side, Checkmarx now supports Amazon's Kiro coding tool, claiming it can eliminate 90% of vulnerabilities before code enters the DevOps workflow. The broader point is clear: security checks are moving directly into AI coding environments. – DevOps.com

🤖 Agent-First Development Gets Real

Harness engineering: leveraging Codex in an agent-first world - OpenAI's engineering team built a fully functional product, a million-line codebase, in five months using only Codex-generated code. Zero manually written lines. The small team merged roughly 1,500 pull requests, averaging 3.5 PRs per engineer per day. – OpenAI

"We estimate that we built this in about 1/10th the time it would have taken to write the code by hand."

The key insight isn't just speed. The team had to fundamentally rethink engineering practice: designing agent environments, specifying intent precisely, and building feedback loops. They also had to continuously clean up "agent-generated drift," a new maintenance category worth watching. Speaking of agents operating autonomously, it's interesting to see this pattern emerging in gaming too, where projects like SpaceMolt are building entire MMO worlds designed for AI agents to explore and interact with independently.

What We're Working On - Prime Radiant's Jesse Vincent hasn't written code since October 2025, instead orchestrating AI agents full-time. He built an automatic engineering notebook that aggregates Claude Code sessions into journal, project, and calendar views. – Prime Radiant

Anthropic updates Claude Code with desktop features - Claude Code's desktop update now spins up dev servers, displays live web apps, auto-fixes errors, and monitors PRs in the background. Sessions sync across CLI, desktop, web, and mobile. The PR monitoring feature that auto-fixes CI failures and merges when tests pass is particularly notable. – The Decoder

📝 Code Review Gets an AI Overhaul

Automated code reviews coming to Gemini CLI Conductor - Google's Conductor extension now validates code after AI generation, producing reports on quality, compliance, security, and test coverage. One expert's quote stuck with me: "An AI coding CLI without automated reviews is like a chainsaw without an 'off' button." One caveat: the review covers only the generated code, not upstream dependencies. – ITPro

Fix code issues with AI agents - CodeRabbit now bundles all flagged issues in a PR into a single structured prompt. Copy once, run once, review the changes. Giving the AI full context of all issues at once improves fix quality and keeps developers in flow. – CodeRabbit
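To make the "one prompt for the whole PR" idea concrete, here is a minimal sketch of bundling review findings into a single structured prompt. This is not CodeRabbit's actual format or API: the `ReviewIssue` class, the `build_fix_prompt` function, and the sample file paths are all hypothetical, made up for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ReviewIssue:
    """One flagged review comment (hypothetical shape, not a real tool's schema)."""
    path: str
    line: int
    message: str


def build_fix_prompt(issues: List[ReviewIssue]) -> str:
    """Combine every flagged issue into one numbered prompt for a coding agent."""
    lines = ["Fix all of the following review issues in one pass:", ""]
    for number, issue in enumerate(issues, start=1):
        lines.append(f"{number}. {issue.path}:{issue.line} - {issue.message}")
    return "\n".join(lines)


# Hypothetical findings from a single pull request.
issues = [
    ReviewIssue("app/db.py", 42, "SQL built with string concatenation"),
    ReviewIssue("app/api.py", 7, "Missing input validation"),
]
prompt = build_fix_prompt(issues)
print(prompt)
```

The point of the design is that the agent sees every issue together, so a fix for one finding is less likely to conflict with a fix for another.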

Qodo 2.1 adds AI-driven rules for smarter code review - Qodo's new Rules System learns from actual code patterns and PR decisions instead of relying on static rule files. It automatically discovers, maintains, and enforces standards during reviews. – ITBrief

LLM Code Review Using IBM Bob - IBM's tutorial walks through automated code review with its generative AI IDE, demonstrating how LLMs reason about code semantics rather than just matching patterns. – IBM

Top 5 AI Code Review Tools for Developers - A solid roundup covering Graphite, Greptile, Qodo, CodeRabbit, and Ellipsis, each targeting different review bottlenecks. – KDnuggets

📊 Benchmarks & Model Updates

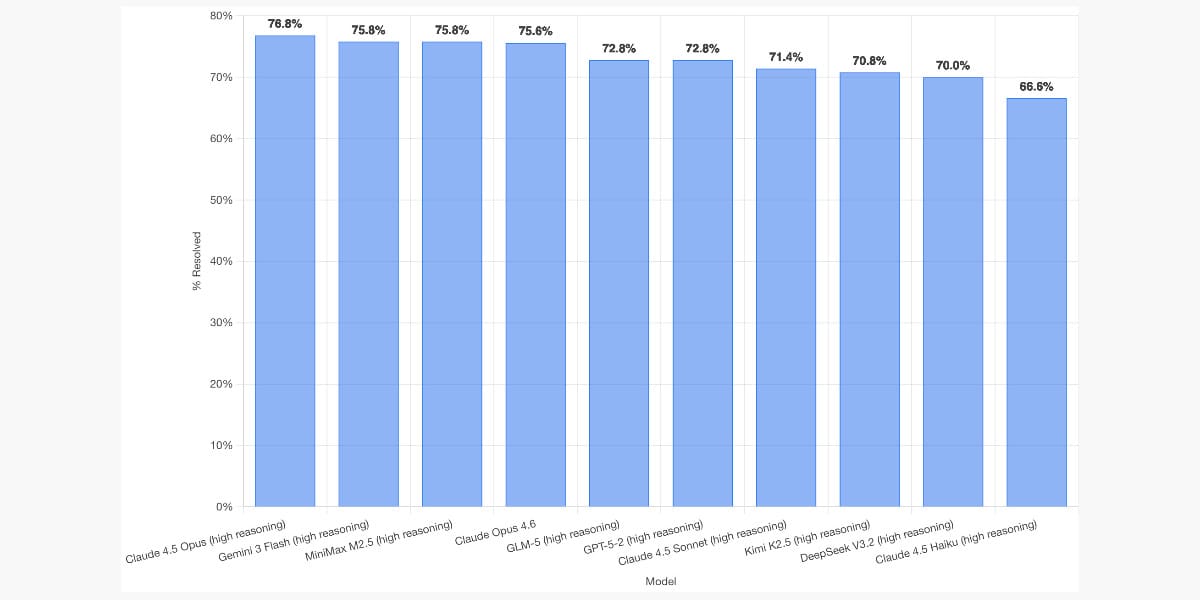

SWE-bench February 2026 leaderboard update - Simon Willison reports Claude Opus 4.5 tops the refreshed leaderboard at 76.8%, narrowly beating Opus 4.6. Chinese models (MiniMax M2.5, GLM-5, Kimi K2.5) occupy several top spots. GPT-5.3-Codex is notably absent, likely because it's not yet API-available. – Simon Willison

Introducing EVMbench - OpenAI and Paradigm released a benchmark for AI agents tackling Ethereum smart contract security across Detect, Patch, and Exploit modes. GPT-5.3-Codex hit 72.2% on exploit tasks but struggled with clean patching, which suggests it's currently easier for AI to break systems than to fix them. – OpenAI

Gemini 3.1 Pro rolling out - Now available in the Gemini App and via API in Google AI Studio. – Google DeepMind

Google's AI plan for Android developers - Gemini-powered features in Android Studio automate dependency upgrades, crash triage, and UI scaffolding. – Gregory Zuckerman

🧑‍💻 The Human Side

Vibe Coders Passing Responsibility to Code Reviewers - A heated Reddit thread highlights developers dumping AI-generated PRs on reviewers without self-review. The concept of "comprehension debt" is worth paying attention to. – r/ExperiencedDevs

How to Make the Best of AI Programming Assistants - Using signal processing theory, this video argues that CI must run on every AI-generated change. If you undersample high-frequency output, you will miss errors. Practical advice: small chunks, fast pipelines, short-lived branches. – Modern Software Engineering
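The undersampling argument is easy to see in a toy model. The sketch below is my own illustration, not code from the video: it assumes we already know whether the test suite would fail right after each change, and that some failures are later masked by unrelated changes. The `observed_failures` function and the sample history are hypothetical.

```python
from typing import List


def observed_failures(failing_after: List[bool], interval: int) -> List[int]:
    """Return the change indices at which a CI run would actually see a failure.

    CI is assumed to run only after every `interval`-th change, so it samples
    the build state at indices interval-1, 2*interval-1, and so on.
    """
    return [
        index
        for index in range(interval - 1, len(failing_after), interval)
        if failing_after[index]
    ]


# Hypothetical history: True means the suite would fail right after that change.
# The failures at changes 1, 4, and 5 are masked by later changes.
history = [False, True, False, False, True, True, False, False]

print(observed_failures(history, interval=1))  # CI on every change
print(observed_failures(history, interval=2))  # CI on every second change
print(observed_failures(history, interval=4))  # CI on every fourth change
```

In this made-up history, running CI on every change catches all three failing states, every second change catches two, and every fourth change sees a green build throughout.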

How to Perform Large Code Refactors in Cursor - Practical guide to using LLM agents for large-scale refactoring with pre- and post-refactor review strategies. – Towards AI

⚡ Quick Hits

Anthropic identifies distillation attacks - Anthropic says DeepSeek, Moonshot AI, and MiniMax created over 24,000 fake accounts and ran 16 million exchanges with Claude to extract its capabilities. – Anthropic

Made with ❤️ by Data Drift Press. Have thoughts on this week's security-heavy news cycle? Hit reply with your questions, comments, or feedback - we read every one.